IRU-Assistant

AI companion surfacing guest context for hospitality staff.

Problem Defined

"Guest preferences are buried in silos, causing reactive service."

Strategic Context

Front-line staff lack immediate access to guest intelligence.

Competitive Imbalance

The gap between what guests expect staff to know and what staff actually know degrades loyalty.

System Hypothesis

Real-time intelligence at the interaction point enables proactive service.

Process Architecture

How the system was designed, tested, and refined.

DEFINE

Surface guest context for hospitality staff without manual search.

- Shadowed hotel staff

- Audited PMS data silos

- Identified preference gaps

- Initial focus was on data collection rather than staff utility

- Intelligence is useless if not delivered at the point of interaction

- Shifted focus to real-time service nudges and context delivery

MAP

Map guest preference data to interaction touchpoints.

- Mapped PMS data flow to staff mobile devices

- Identified decision points during guest arrival

- Maps didn't account for high-tempo breakfast and check-out peaks

- Context delivery must be tiered by urgency

- Created priority-based intelligence delivery logic
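Urgency-tiered delivery could look roughly like the sketch below. It assumes three hypothetical tiers (`IMMEDIATE`, `SOON`, `BACKGROUND`), a hypothetical `deliver` function, and a simple rule that only the most urgent items are pushed during peaks; the case study does not specify the actual tiers or thresholds.

```python
from dataclasses import dataclass
from enum import IntEnum


class Urgency(IntEnum):
    """Hypothetical urgency tiers; a lower value means more urgent."""
    IMMEDIATE = 0   # must reach staff before the interaction (e.g. an allergy)
    SOON = 1        # useful within the current service window
    BACKGROUND = 2  # can wait for a quieter moment


@dataclass
class ContextItem:
    guest_id: str
    message: str
    urgency: Urgency


def deliver(items: list[ContextItem], peak: bool, max_items: int = 3) -> list[ContextItem]:
    """Pick the context items to push to a staff device right now.

    During peaks (e.g. breakfast or check-out) only IMMEDIATE items pass;
    otherwise items are ordered by urgency and capped at max_items.
    """
    allowed = [i for i in items if not peak or i.urgency == Urgency.IMMEDIATE]
    return sorted(allowed, key=lambda i: i.urgency)[:max_items]


items = [
    ContextItem("g1", "Prefers a quiet room", Urgency.BACKGROUND),
    ContextItem("g2", "Nut allergy", Urgency.IMMEDIATE),
    ContextItem("g3", "Requested late check-out", Urgency.SOON),
]
print([i.message for i in deliver(items, peak=True)])   # ['Nut allergy']
print([i.message for i in deliver(items, peak=False)])
```

Filtering by tier during peaks, rather than just reordering, keeps low-urgency context off the screen entirely when staff have the least attention to spare.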

VALIDATE

Test preference-based service interventions.

- Tested prototype with service teams

- Measured staff confidence after briefings

- Staff found long-form bios distracting during service

- Information must be converted into actions, not just data

- Switched from "Guest Bios" to "Suggested Nudges"
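The shift from bios to nudges amounts to mapping each stored preference onto an action. A minimal sketch, assuming hypothetical preference categories and a hypothetical `NUDGE_TEMPLATES` table; preferences with no actionable template are dropped instead of being shown as raw data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preference:
    category: str  # hypothetical categories, e.g. "pillow", "beverage"
    value: str


# Hypothetical templates that turn a stored preference into an instruction.
NUDGE_TEMPLATES = {
    "pillow": "Place {value} pillows in the room before arrival.",
    "beverage": "Offer {value} at seating.",
    "newspaper": "Have {value} ready at breakfast.",
}


def to_nudges(prefs: list[Preference]) -> list[str]:
    """Convert raw preferences into short, actionable nudges.

    Preferences without a template are skipped, so staff never see
    bio-style data they cannot act on.
    """
    nudges = []
    for p in prefs:
        template = NUDGE_TEMPLATES.get(p.category)
        if template is not None:
            nudges.append(template.format(value=p.value))
    return nudges


prefs = [
    Preference("beverage", "sparkling water"),
    Preference("shoe_size", "42"),  # no template: not actionable, dropped
    Preference("pillow", "feather"),
]
print(to_nudges(prefs))
```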

EXECUTE

Build the contextual intelligence interface.

- Preference modeling engine

- PMS integration layer

- Nudge UI

- Over-engineered the historical data parsing early on

- Current context > Deep history for immediate service quality

- Prioritized immediate visit data and active preferences
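Prioritizing the current visit over deep history can be expressed as a filter plus a ranking. The sketch below is illustrative only: the `Observation` record, its `active` flag, and the one-year `lookback_days` window are assumptions, not details from the project.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Observation:
    preference: str
    observed_on: date
    active: bool  # hypothetical flag: the guest has not changed or revoked it


def current_context(obs: list[Observation], stay_start: date,
                    lookback_days: int = 365, limit: int = 5) -> list[str]:
    """Return active preferences, favoring the current visit over older history.

    Observations from the current stay rank first, then the most recent
    older ones; anything outside the (assumed) lookback window is ignored.
    """
    cutoff = stay_start - timedelta(days=lookback_days)
    relevant = [o for o in obs if o.active and o.observed_on >= cutoff]
    # False sorts before True, so current-stay observations come first.
    relevant.sort(key=lambda o: (o.observed_on < stay_start, -o.observed_on.toordinal()))
    return [o.preference for o in relevant[:limit]]


stay = date(2024, 6, 10)
obs = [
    Observation("feather pillows", date(2023, 12, 1), True),
    Observation("extra towels", date(2024, 6, 11), True),
    Observation("vegan menu", date(2020, 1, 1), True),    # outside lookback
    Observation("smoking room", date(2024, 6, 1), False),  # no longer active
]
print(current_context(obs, stay))
```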

MEASURE

Measure guest NPS and staff operational confidence.

- Guest NPS increase

- Staff response time

- Preference fulfillment rate

- Early data was too anecdotal to confirm system shift

- Briefing adoption is the best proxy for system trust

- Introduced automated adoption tracking for briefings
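Automated adoption tracking reduces to a couple of ratios over briefing events. A minimal sketch, assuming a hypothetical `BriefingEvent` log with `delivered`, `opened`, and `acted_on` flags; the project's actual event schema and metric definitions are not given.

```python
from dataclasses import dataclass


@dataclass
class BriefingEvent:
    briefing_id: str
    delivered: bool
    opened: bool
    acted_on: bool  # hypothetical: staff marked the nudge as done


def adoption_metrics(events: list[BriefingEvent]) -> dict[str, float]:
    """Open and action rates, computed over delivered briefings only."""
    delivered = [e for e in events if e.delivered]
    if not delivered:
        return {"open_rate": 0.0, "action_rate": 0.0}
    opened = sum(e.opened for e in delivered)
    acted = sum(e.acted_on for e in delivered)
    return {
        "open_rate": opened / len(delivered),
        "action_rate": acted / len(delivered),
    }


events = [
    BriefingEvent("b1", True, True, True),
    BriefingEvent("b2", True, True, False),
    BriefingEvent("b3", True, True, False),
    BriefingEvent("b4", True, False, False),
    BriefingEvent("b5", False, False, False),  # never delivered: excluded
]
print(adoption_metrics(events))  # {'open_rate': 0.75, 'action_rate': 0.25}
```

Separating "opened" from "acted on" distinguishes staff glancing at a briefing from staff trusting it enough to act.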

Rule Application

How doctrine was operationalized.

Intellectual Rigor

01_INT

- Mapping PMS hierarchy before integration

- Defining clear success metrics

18% increase in NPS achieved through structured briefings

Tactical Execution

02_TAC

- Shipping lean briefing interface first

- Integrating with existing hardware

System operational on existing staff tablets in 3 weeks

Human Calibration

03_HUM

- Reducing cognitive load for front-line staff

- Ensuring glanceable data delivery

Briefings reduced to <5 seconds of staff attention
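One way to hold a briefing to a fixed attention budget is to cap it by estimated reading time. The sketch assumes a reading speed of about 4 words per second (an assumption, not a measured figure from this project) and a hypothetical `glanceable` helper that keeps whole nudges in priority order until the budget runs out.

```python
def glanceable(nudges: list[str], seconds: float = 5.0,
               words_per_second: float = 4.0) -> list[str]:
    """Keep whole nudges, in order, until the estimated reading time is used up.

    words_per_second is an assumed reading speed; nudges are never cut
    mid-sentence, so a nudge that would exceed the budget is dropped.
    """
    budget = int(seconds * words_per_second)
    kept, used = [], 0
    for nudge in nudges:
        words = len(nudge.split())
        if used + words > budget:
            break
        kept.append(nudge)
        used += words
    return kept


nudges = [
    "Nut allergy: confirm with kitchen.",
    "Offer sparkling water at seating.",
    "Celebrating anniversary this stay; consider a handwritten card from the team.",
]
print(glanceable(nudges))
```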

Machine Leverage

04_AI

- AI synthesis of disparate guest data

- Automated nudge generation

AI identifies high-value preference patterns without manual filtering
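The synthesis step starts by merging records from separate systems and spotting preferences that recur across stays. The sketch below is a plain frequency heuristic standing in for the AI model, not the model itself; the `(guest_id, stay_id, source, preference)` record shape and the `min_stays` threshold are hypothetical.

```python
from collections import defaultdict


def recurring_preferences(records: list[tuple[str, str, str, str]],
                          min_stays: int = 2) -> dict[str, list[str]]:
    """Flag preferences a guest showed in at least min_stays distinct stays.

    records: (guest_id, stay_id, source_system, preference) tuples, where
    source_system might be the PMS, spa, or restaurant (hypothetical).
    Preference text is normalized so the same wish from different silos merges.
    """
    stays_per_pref: dict[tuple[str, str], set[str]] = defaultdict(set)
    for guest_id, stay_id, _source, pref in records:
        stays_per_pref[(guest_id, pref.strip().lower())].add(stay_id)

    patterns: dict[str, list[str]] = defaultdict(list)
    for (guest_id, pref), stays in stays_per_pref.items():
        if len(stays) >= min_stays:
            patterns[guest_id].append(pref)
    return {guest: sorted(prefs) for guest, prefs in patterns.items()}


records = [
    ("g1", "s1", "pms", "Feather pillows"),
    ("g1", "s2", "spa", "feather pillows "),
    ("g1", "s2", "restaurant", "window table"),
    ("g2", "s9", "pms", "late checkout"),
]
print(recurring_preferences(records))  # {'g1': ['feather pillows']}
```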

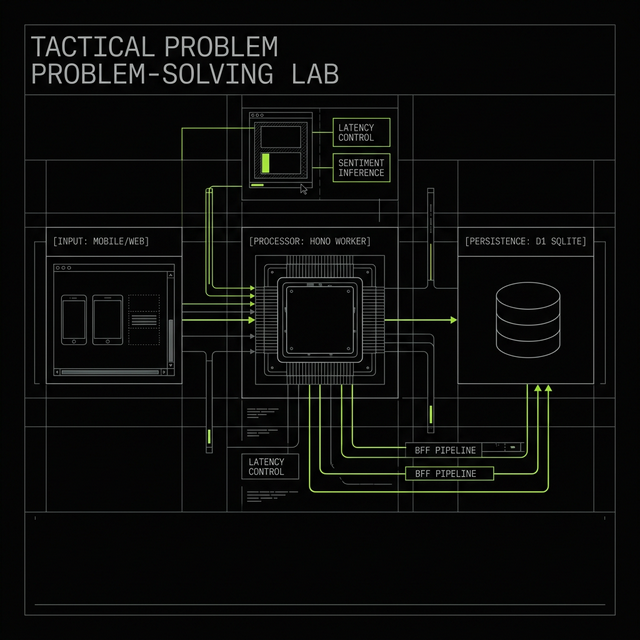

Product Architecture

Preference modeling, PMS integration, contextual UI.

AI Leverage

Real-time synthesis for service nudges.

Outcomes & Learnings

Delivered personalized service without manual briefing.
